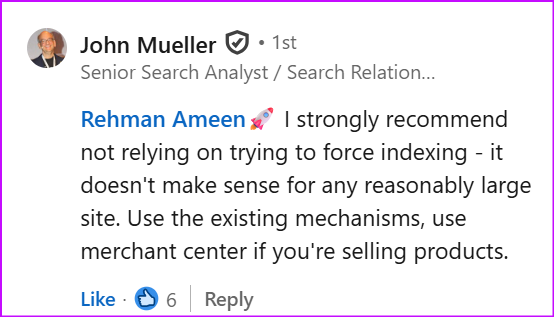

Google’s John Mueller popped up on LinkedIn recently to tell an e-commerce SEO that he “strongly recommends not relying on trying to force indexing”.

The SEO was complaining that the 10-requests-per-day limit in Google Search Console was “very restrictive” for sites managing thousands of products. Another SEO jumped in suggesting workarounds – priority sitemaps, internal linking hubs, all sorts of creative solutions to get more pages indexed faster.

John wasn’t having it. Stop trying to force it, he said. Use the existing mechanisms. Use Merchant Center if you’re selling products. You can see the post below or read the whole discussion on LinkedIn.

And this isn’t just him having a bad day on social media. He said essentially the same thing back in 2020: “If your site relies on manual index submission for normal content, you need to significantly improve your site.”

And as Search Engine Roundtable points out, John said it again in 2022: “Google refreshes the index of the pages it knows over time automatically. If it’s urgent, you can submit it for reindexing, but that’s extremely rare across the web.”

Six years of Google saying the same thing. Yet people are still moaning about the limit like it’s the problem.

The forced indexing limit is a symptom, not the disease

If you’re hitting that 10-per-day limit regularly, you’ve got bigger issues than Google’s quota.

Google isn’t refusing to index your pages because you haven’t manually submitted them. Google is refusing to index your pages because it doesn’t think they’re worth indexing.

That might be thin product descriptions that say bugger all. Duplicate content across hundreds of variations. Pages that exist simply because someone thought more URLs equals more rankings.

Or it might be a site structure problem. Poor internal linking. Crawl budget disappearing into pages nobody cares about. Technical bollocks that makes it hard for Google to find anything useful.
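
If you want a rough way to check the structure side, here's a minimal sketch that works out how many clicks each page sits from the homepage. It assumes you've already exported your internal links from a crawler into a plain dictionary. The function names, the sample URLs and the `max_depth` of 3 are all made up for illustration, not anything Google has published.

```python
from collections import deque


def click_depths(links, home):
    """links: dict of URL -> list of URLs it links to.
    Returns a dict of URL -> number of clicks from the homepage."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def buried_or_orphaned(links, home, max_depth=3):
    """Split problem pages into 'buried' (too many clicks deep) and
    'orphaned' (not reachable from the homepage at all).
    max_depth is an arbitrary assumption; pick your own."""
    depths = click_depths(links, home)
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    buried = sorted(p for p, d in depths.items() if d > max_depth)
    orphaned = sorted(all_pages - set(depths))
    return buried, orphaned


# Hypothetical link graph for a tiny shop
links = {
    "/": ["/cat"],
    "/cat": ["/p1"],
    "/p1": ["/p2"],
    "/p2": ["/p3"],
    "/p3": ["/p4"],
    "/old": ["/"],  # links out, but nothing links to it
}
buried, orphaned = buried_or_orphaned(links, "/")
print("Buried:", buried)
print("Orphaned:", orphaned)
```

Anything that turns up as buried or orphaned is a candidate for better internal linking, which is a more useful job than submitting it by hand.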

Manually submitting URLs doesn’t fix any of that. You’re just repeatedly asking Google to look at pages it’s already decided aren’t worth bothering with.

Using ‘request indexing’ occasionally is fine

I’m not saying never touch the request indexing button. If you’ve genuinely updated an important page and want Google to notice faster, go for it. That’s what it’s there for.

The problem is when it becomes your whole indexing strategy. When you’re building systems to submit as many URLs as possible. When you’re annoyed that Google only lets you do 10 a day because you need to do hundreds.

That’s when you need to stop and ask why Google isn’t naturally crawling and indexing your content in the first place.

Fix the actual problem, don’t moan about the limits

If you’ve got thousands of product pages stuck in “Discovered – currently not indexed”, submitting them one by one isn’t the answer.

Look at why Google doesn’t want them. Are they thin? Are they duplicates? Are they buried so deep that Google can barely find them? Are they competing with each other for the same keywords?
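
Two of those questions are easy to rough out with a script. The sketch below flags thin descriptions and exact duplicates, assuming you can export each URL with its description text. The 80-word threshold, the function names and the sample pages are hypothetical, and it only catches descriptions that match exactly after tidying up case and whitespace, not near-duplicates.

```python
import hashlib
import re
from collections import defaultdict

# Hypothetical threshold: tune it for your own catalogue.
MIN_WORDS = 80


def normalise(text):
    """Lowercase and collapse whitespace so trivial differences
    don't hide duplicates."""
    return re.sub(r"\s+", " ", text.lower()).strip()


def audit(pages):
    """pages: dict of URL -> product description text.
    Returns (thin, duplicates): thin is a list of URLs under MIN_WORDS,
    duplicates is a list of URL groups sharing the same description."""
    thin = []
    by_hash = defaultdict(list)
    for url, text in pages.items():
        clean = normalise(text)
        if len(clean.split()) < MIN_WORDS:
            thin.append(url)
        digest = hashlib.sha256(clean.encode("utf-8")).hexdigest()
        by_hash[digest].append(url)
    duplicates = [urls for urls in by_hash.values() if len(urls) > 1]
    return thin, duplicates


# Made-up sample data
pages = {
    "/mug-red": "Red  mug",
    "/mug-red-large": "red mug",
    "/teapot": "word " * 100,
}
thin, duplicates = audit(pages)
print("Thin:", thin)
print("Duplicate groups:", duplicates)
```

It won't tell you whether a page is genuinely useful, but it will show you how much of the catalogue is the same sentence copied across variations.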

Make the pages worth indexing. Make them easy to find. Make them genuinely useful to someone who’s searching.

Google will index content it thinks is valuable. If it’s not indexing yours, that’s feedback – and ignoring it while hammering the submit button isn’t going to change anything.
