In “crazy SEO news you couldn’t make up”, Cloudflare just launched a feature called “Markdown for Agents” that automatically converts your HTML pages into markdown for AI crawlers. The pitch is that markdown is more “token efficient” for large language models, so serving stripped-down versions of your content will make AI systems more likely to use it.

My thoughts? It’s a fucking ridiculous move from a company that really should know better.

But don’t listen to me – I’m just an SEO freelancer with a big mouth. Listen to the people who matter.

Within days, both Google and Bing came out against the entire concept of serving different content to bots than you serve to humans. And they haven’t been subtle about it.

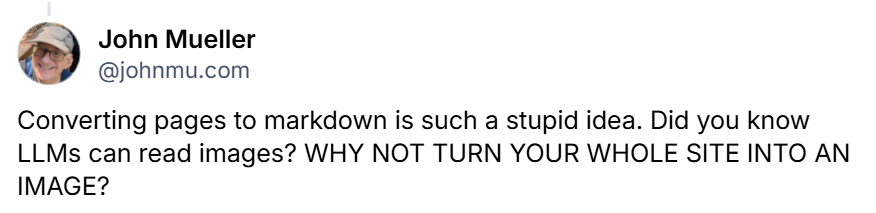

John Mueller from Google called the idea “stupid” on Bluesky. His reasoning is straightforward – LLMs have been reading and parsing normal HTML web pages since the beginning. They don’t need special markdown versions. They can handle your actual website just fine.

Back in November 2025, John also said “In my POV, LLMs have trained on – read & parsed – normal web pages since the beginning, it seems a given that they have no problems dealing with HTML. Why would they want to see a page that no user sees? And, if they check for equivalence, why not use HTML?”

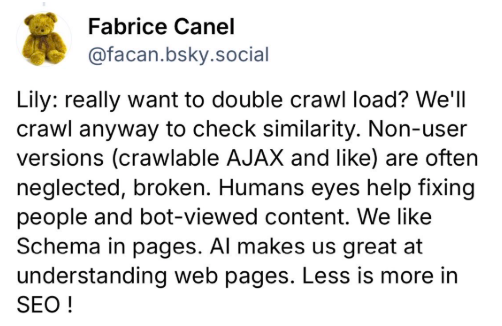

Fabrice Canel from Bing was equally blunt in response to a question from Lily Ray on X, pointing out that serving separate markdown files means double the crawl load. Bing will crawl both versions anyway to check they match. And if they don’t match? You’ve just created a cloaking problem.

Why stripping out HTML structure makes things worse, not better

The whole premise of markdown-for-bots is backwards. HTML isn’t noise that needs filtering out – it’s structure that helps machines understand your content.

Your headings, navigation, layout patterns, and semantic markup all give context to what your page is about. When you flatten everything into plain markdown, you’re removing signals rather than adding them. You’re making interpretation harder, not easier.

Cloudflare’s argument is that markdown saves tokens. A blog post that takes 16,000 tokens in HTML might only take 3,000 in markdown. But this assumes AI systems are struggling with HTML in the first place. They’re not. As John pointed out, they’ve been doing this since the beginning.
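For scale, here’s the arithmetic on those two example figures as a quick Python sketch. The function name is mine, and the numbers are only the illustrative ones quoted above, not measurements of any real page.

```python
def token_saving(html_tokens: int, markdown_tokens: int) -> float:
    """Fraction of tokens saved by serving markdown instead of HTML."""
    return (html_tokens - markdown_tokens) / html_tokens


# Illustrative figures from the example above, not real measurements.
saving = token_saving(16_000, 3_000)
print(f"{saving:.0%} fewer tokens")
```

That’s roughly a four-fifths cut on paper. But a saving only counts if the thing being saved was a problem in the first place.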

And let’s not forget that there’s NO evidence that any of the major AI platforms actually prefer markdown, reward sites that use it, or cite them more often. None at all. It’s a solution looking for a problem.

This is just GEO bollocks in a different outfit

If this sounds familiar, it should. It’s the same pattern I’ve been calling out for months with the whole “Generative Engine Optimisation” circus.

Someone invents a technical-sounding tactic. It gets packaged as essential for AI search. Business owners panic and start implementing it. And six months later, we find out it made no difference – or made things worse.

Danny Sullivan from Google confirmed in January that you shouldn’t be formatting content into “bite-sized chunks” for AI search. His exact words: “We don’t want you to do that.” The same logic applies here. Creating special versions of your pages for bots isn’t clever optimisation. It’s overcomplicating something that doesn’t need complicating. Isn’t the whole SEO sphere complicated enough as it is? Why are we making it worse and more difficult for clients to understand?

The cloaking problem waiting to destroy your rankings

Serving different content to crawlers than you serve to humans has a name in SEO. It’s called cloaking, and Google has been penalising sites for it since the early 2000s.

Now, proponents of markdown-for-bots will argue it’s not really cloaking because the content is “equivalent”. But as Lily Ray pointed out on LinkedIn, this is exactly the kind of grey area that tends to bite people on the arse later down the line.

What happens when your markdown version gets out of sync with your HTML? What happens when you update one but forget the other? What happens when the AI companies decide these separate files are being used for manipulation and start ignoring them entirely?

You’ve now got two versions of every page to maintain, debug, and keep synchronised. You’ve doubled your technical overhead for zero proven benefit.
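To give a feel for what “keep synchronised” means in practice, here’s a rough Python sketch of a drift check between an HTML page and its markdown twin. Everything in it is my own assumption for illustration: the function names, the crude markdown handling, and the idea of comparing word sequences. It is not how Google, Bing, or Cloudflare check equivalence.

```python
import re
from difflib import SequenceMatcher
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects visible text, skipping <script> and <style> contents."""

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.parts.append(data)


def words_from_html(html):
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return re.findall(r"\w+", " ".join(parser.parts).lower())


def words_from_markdown(markdown):
    # Crude on purpose: drop link and image targets, keep only the words.
    text = re.sub(r"\]\([^)]*\)", "]", markdown)
    return re.findall(r"\w+", text.lower())


def similarity(html, markdown):
    """Return 1.0 when both versions contain the same words in the same order."""
    matcher = SequenceMatcher(
        None, words_from_html(html), words_from_markdown(markdown), autojunk=False
    )
    return matcher.ratio()


html_page = "<h1>Pricing</h1><p>Plans start at £10 a month.</p>"
stale_markdown = "# Pricing\n\nPlans start at £8 a month."
print(similarity(html_page, stale_markdown) < 1.0)  # the price change shows up as drift
```

Even this toy version is one more thing to run, monitor, and fix for every page on the site, which is exactly the overhead I’m talking about.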

Glenn Gabe compared it to AMP – Google’s mobile pages initiative that promised ranking benefits and eventually fizzled out. The key difference? AMP at least had clear rewards at the time. Markdown-for-bots has nothing except speculation.

Why clean HTML is all you need right now for AI visibility

Keep your HTML clean and accessible. Make sure your content is available server-side rather than buried behind heavy JavaScript. Use structured data for things that matter. Build a logical site structure with clear headings and internal links.
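If you want a quick way to sanity-check the server-side point, here’s a minimal Python sketch. It takes the raw HTML exactly as the server returns it (what you’d see in “view source”, before any JavaScript runs) and tells you which key phrases are missing from the visible text. The function name and the sample pages are made up for illustration.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collects text a crawler can read without executing JavaScript."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._hidden = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._hidden += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._hidden:
            self._hidden -= 1

    def handle_data(self, data):
        if not self._hidden:
            self.chunks.append(data)


def missing_phrases(raw_html, phrases):
    """Return the phrases that do not appear in the page's visible text."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    # Lowercase and collapse whitespace so line breaks don't cause false misses.
    text = " ".join(" ".join(parser.chunks).lower().split())
    return [p for p in phrases if " ".join(p.lower().split()) not in text]


# Hypothetical pages: one rendered on the server, one built by JavaScript.
server_rendered = "<main><h1>Emergency plumber in Leeds</h1><p>Call us 24/7.</p></main>"
js_shell = '<div id="root"></div><script>render("Emergency plumber in Leeds")</script>'

print(missing_phrases(server_rendered, ["Emergency plumber in Leeds"]))
print(missing_phrases(js_shell, ["Emergency plumber in Leeds"]))
```

If your main heading or key service copy comes back as missing, that’s a far more useful thing to fix than anything markdown-related.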

This is the same advice that’s worked for years. It works for Google. It works for Bing. And yes, it works for AI systems too – because AI systems are pulling from the same search indexes and using the same signals.

You don’t need separate markdown pages. You don’t need special AI-optimised content. You don’t need to pay someone to convert your site into a format that search engines have explicitly said they don’t want.

Just do good SEO. It’s not as exciting as a shiny new technical solution, but it’s what works.

More reading:

- Markdown is the new AMP for AI agents; should you use it?

- Google & Bing: Markdown Files Messy & Causes More Crawl Load

If you want to talk about this or any other aspect of your SEO, grab me on LinkedIn.
