Google PageSpeed Insights is one of those tools that everyone’s heard of but few people properly understand. You pop your URL in, hold your breath, and then either celebrate or cry depending on what numbers come out.

But it’s entirely possible that those numbers aren’t telling you what you think they’re telling you.

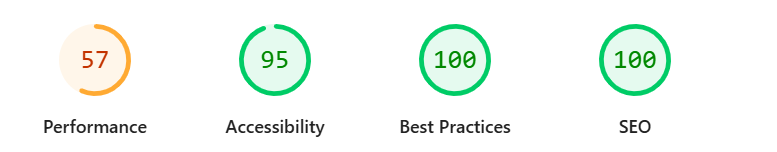

I’ve lost count of how many business owners have shown me their PageSpeed Insights results with either smug satisfaction (“Look, I got 100 on SEO!”) or mild panic (“My performance score is 67, is my website broken?”). Both reactions are usually misplaced.

Let me walk you through what each of those scores means, what they don’t mean, and why obsessing over getting 100/100 is a waste of your time.

What the four PageSpeed Insights scores measure

When you run a test on PageSpeed Insights, you get four scores: Performance, Accessibility, Best Practices, and SEO. Each one is rated out of 100, and each one measures something completely different.

Performance

This is the one most people focus on, and it’s basically measuring how fast your page loads. It looks at things like how quickly something appears on screen, how long before visitors can interact with the page, and whether elements jump around while loading.

Your performance score is calculated from several metrics, with the main ones being Largest Contentful Paint (how quickly the main content loads), Cumulative Layout Shift (whether stuff moves around unexpectedly), and Total Blocking Time (how long the page is frozen while scripts run).
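If you're curious how several metrics turn into one number, here's a rough sketch of the weighted average behind it. The weights are the ones published for Lighthouse 10, which adds two metrics I haven't covered here (First Contentful Paint and Speed Index). Treat them as an assumption, because they have changed between versions. The per-metric scores in the example are made up for illustration.

```python
# Metric weights as published for Lighthouse 10 (an assumption here:
# they have changed between versions and may change again).
WEIGHTS = {
    "first_contentful_paint": 0.10,
    "speed_index": 0.10,
    "largest_contentful_paint": 0.25,
    "total_blocking_time": 0.30,
    "cumulative_layout_shift": 0.25,
}


def performance_score(metric_scores: dict) -> int:
    """Combine per-metric scores (each 0.0 to 1.0) into a 0-100 weighted average."""
    total = sum(WEIGHTS[name] * metric_scores[name] for name in WEIGHTS)
    return round(total * 100)


# Hypothetical per-metric scores, invented for this example.
example_scores = {
    "first_contentful_paint": 0.9,
    "speed_index": 0.8,
    "largest_contentful_paint": 0.6,
    "total_blocking_time": 0.5,
    "cumulative_layout_shift": 1.0,
}
print(performance_score(example_scores))  # 72
```

Notice that a perfect layout-shift score can't rescue a page with slow loading and heavy scripts, because those two carry more than half the weight between them.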

A score of 90+ is considered good. 50-89 needs improvement. Below 50 is poor.
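Those bands are simple enough to write down as code. This is only a sketch of the thresholds above, and the function name `rate_score` is my own invention, not anything PageSpeed Insights exposes.

```python
def rate_score(score: int) -> str:
    """Map a 0-100 PageSpeed Insights score to the band it falls into."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score >= 90:
        return "good"
    if score >= 50:
        return "needs improvement"
    return "poor"


print(rate_score(67))  # needs improvement
```

So the 67 that caused the mild panic earlier sits in the middle band. It isn't a sign of a broken website.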

The performance score is often lower on mobile than desktop because the test simulates a mid-range phone on a slower network connection. Don’t panic if your mobile score looks worse than your desktop score – that’s normal. Save your concern for a score that falls into the poor range (below 50) on either.

Accessibility

This checks whether people with disabilities can use your website. It looks for things like whether your images have alt text, whether there’s enough contrast between text and background colours, whether form fields have labels, and whether the page can be navigated with a keyboard.

A score of 100 doesn’t mean your site is fully accessible – it means you’ve passed the automated checks. Some accessibility issues can only be found through manual testing. But it’s a good starting point.

Best practices

This is a collection of technical checks around security and web development standards. Things like whether you’re using HTTPS, whether your images are the right aspect ratio, whether you’ve got any obvious security vulnerabilities in your JavaScript libraries.

Most websites score fairly well on this without trying too hard.

SEO – and why 100 doesn’t mean your SEO is sorted

This is where people get confused. A lot of people see “SEO: 100” and think their search engine optimisation is perfect. It isn’t.

The SEO score in PageSpeed Insights only checks whether your site can be crawled and indexed by search engines. It’s looking at technical basics like whether you have a title tag, whether your page is set to be indexable, whether your links can be crawled, and whether your images have alt text.

You could have a page with a title tag, an H1 that says “welcome to my website” and a button saying “call me” – it would pass this test but not perform well in search. The test doesn’t assess your content quality, whether you’re targeting the right search intent, whether your page structure makes sense, or whether anyone would ever want to read what you’ve written.
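To show how shallow these checks are, here's a minimal sketch that mimics three of them: a title tag exists, the page isn't set to noindex, and every image has an alt attribute. It is not how PageSpeed Insights implements its audits. The class and function names are made up for illustration, and the real test covers more than this.

```python
from html.parser import HTMLParser


class BasicSeoChecks(HTMLParser):
    """Collects the few facts a crawlability-style check cares about."""

    def __init__(self):
        super().__init__()
        self.in_title = False
        self.title = ""
        self.noindex = False
        self.images_missing_alt = 0

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self.in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "robots":
            self.noindex = "noindex" in (attrs.get("content") or "").lower()
        elif tag == "img" and "alt" not in attrs:
            self.images_missing_alt += 1

    def handle_endtag(self, tag):
        if tag == "title":
            self.in_title = False

    def handle_data(self, data):
        if self.in_title:
            self.title += data


def passes_basic_checks(html: str) -> bool:
    """True if the page has a title, is indexable, and has no images without alt."""
    parser = BasicSeoChecks()
    parser.feed(html)
    return (
        bool(parser.title.strip())
        and not parser.noindex
        and parser.images_missing_alt == 0
    )


thin_page = """<html><head><title>Home</title></head>
<body><h1>welcome to my website</h1><button>call me</button></body></html>"""
print(passes_basic_checks(thin_page))  # True
```

The thin page passes, and nothing in the code ever looks at whether the words on it are any good.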

Think of it this way: a score of 100 on SEO means your website CAN be indexed by Google. It doesn’t mean Google will want to rank it for anything useful.

If your SEO score is below 100, check what’s failing – the report will tell you exactly which checks didn’t pass. But don’t mistake a perfect score for perfect SEO.

Lab data vs field data explained

PageSpeed Insights shows you two types of data, and understanding the difference helps you make sense of what you’re seeing.

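Field data (sometimes called “real user data”) comes from actual Chrome users who have visited your site. Google collects this anonymously, from users who have opted in to sharing usage statistics, over a rolling 28-day period (it’s published as the Chrome User Experience Report). It tells you how your real visitors experience your website.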

Lab data comes from an automated test, run by a tool called Lighthouse, that starts when you enter your URL. It simulates a page load in a controlled environment – a mid-tier phone on a mobile network for the mobile test, or a desktop computer on a wired connection for desktop.

Why does this matter? Because you’ll often see different results between the two.

Your field data might show your site is fast for real users, while the lab data shows poor performance. Or vice versa. The lab test is useful for diagnosing specific issues, but the field data tells you what your actual visitors experience.

If your field data shows green scores across the board, your real users are probably having a good experience – even if the lab test looks a bit rough.

Some websites don’t have field data at all. That usually means your site doesn’t get enough traffic for Google to collect meaningful data, or too few of your visitors are Chrome users who have opted in to sharing that data.
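If you (or your developer) want to see the two data types side by side, the same report is available from the PageSpeed Insights API. Here's a minimal sketch. It assumes the v5 response keeps lab results under `lighthouseResult` and field data under `loadingExperience`. The helper names are my own, and the sample response is hand-written for illustration rather than a real measurement.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

API = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def fetch_report(page_url: str, strategy: str = "mobile") -> dict:
    """Request a PageSpeed Insights report (this makes a real network call)."""
    query = urlencode({"url": page_url, "strategy": strategy})
    with urlopen(f"{API}?{query}") as response:
        return json.load(response)


def summarise(report: dict) -> dict:
    """Pull out the lab performance score and the field-data verdict."""
    lab = (
        report.get("lighthouseResult", {})
        .get("categories", {})
        .get("performance", {})
        .get("score")
    )
    field = report.get("loadingExperience", {})
    return {
        # The API reports the lab score as 0.0-1.0; convert it to 0-100.
        "lab_performance": None if lab is None else round(lab * 100),
        # Stays None when there is no field data for the page.
        "field_overall": field.get("overall_category"),
    }


# Hand-written sample for illustration, not a real measurement.
sample = {
    "lighthouseResult": {"categories": {"performance": {"score": 0.72}}},
    "loadingExperience": {"overall_category": "FAST"},
}
print(summarise(sample))  # {'lab_performance': 72, 'field_overall': 'FAST'}
```

To try it on a live page, you'd call `summarise(fetch_report("https://example.com"))`. A result like the sample one, with a middling lab score but fast field data, is exactly the situation where the lab test looks rough while real visitors are fine.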

Core Web Vitals – the quick version

Core Web Vitals are Google’s way of measuring user experience through three specific metrics. They’re a minor part of Google’s ranking factors, so they’re worth understanding, but not obsessing over.

Largest Contentful Paint (LCP) measures loading speed – specifically, how long before the main content is visible. You want this under 2.5 seconds. If your LCP is slow, it’s usually down to large images, slow server response, or render-blocking resources.

Cumulative Layout Shift (CLS) measures visual stability – whether things move around on the page while it loads. You’ve experienced bad CLS if you’ve ever tried to click a button and the page shifted, making you click something else instead. You want this score below 0.1.

Interaction to Next Paint (INP) measures responsiveness – how quickly the page responds when someone clicks or taps something. (This replaced First Input Delay in 2024.) You want this under 200 milliseconds.

If you pass all three Core Web Vitals with green scores, you’ve met Google’s baseline for user experience. That’s what matters for SEO – not hitting 100 on the overall performance score.
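Put as code, the pass/fail logic is nothing more than three comparisons. This sketch uses the thresholds quoted above and treats a value sitting exactly on the threshold as a pass, which is how Google's published "good" ranges are defined. The function name is hypothetical.

```python
def core_web_vitals_report(lcp_seconds: float, cls: float, inp_ms: float) -> dict:
    """Check each metric against its 'good' threshold."""
    results = {
        "LCP": lcp_seconds <= 2.5,  # Largest Contentful Paint, in seconds
        "CLS": cls <= 0.1,          # Cumulative Layout Shift, unitless
        "INP": inp_ms <= 200,       # Interaction to Next Paint, in milliseconds
    }
    results["passes_all"] = all(results.values())
    return results


print(core_web_vitals_report(lcp_seconds=2.1, cls=0.05, inp_ms=180))
print(core_web_vitals_report(lcp_seconds=3.4, cls=0.05, inp_ms=180))
```

The first call passes everything. The second fails on LCP alone, which is enough to fail the overall assessment.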

Why chasing 100/100 is pointless

I see business owners lose sleep over not hitting perfect scores on PageSpeed Insights. Please don’t be one of them.

Google doesn’t use the overall Lighthouse score (the performance number from the lab test) as a ranking factor. John Mueller from Google has said this directly. What Google does use is the Core Web Vitals data from real users. So if your field data shows you’re passing Core Web Vitals, you’re fine – even if your performance score is 72.

A score of 90+ is considered “good” by Google’s own standards. Anything above that is diminishing returns. The effort required to go from 95 to 100 is often massive, involving things like completely restructuring your code or removing useful features.

That time would be better spent on content that serves your customers, building links, or pretty much anything else.

Some of the highest-ranking websites in competitive industries don’t have perfect PageSpeed scores. They’re ranking because they’ve got relevant, helpful content and strong authority – not because they’ve obsessed over shaving 0.3 seconds off their load time.

Focus on passing Core Web Vitals with your real user data. Make sure your site loads reasonably quickly and doesn’t jump around while loading. Then move on to the things that will make a bigger difference to your rankings.

PageSpeed Insights is a useful diagnostic tool. It’s not a report card, and nobody’s going to give you a gold star for hitting 100.

This blog post was written for the members of my Ascend SEO Mentoring for Copywriters Programme – join the waiting list for the next cohort.